It is a widely held view that the term ‘alarmist’ is a discriminatory label that is every bit as divisive and dismissive as ‘denier’. This would, of course, be a convenient view for an alarmist to hold since it provides a pretext for rejecting the label, thereby avoiding discussion of the term’s legitimate application. In fact, fussing over the use of supposedly essentialist labels that support tribalism is a good way of neatly evading two inconvenient truths. Firstly, being accused of holding an unjustified perception of high risk (alarmism) is nowhere near as pathologizing as being accused of an inability to accept self-evident facts (denialism). The former accusation simply questions an individual’s judgment and challenges the accused to produce a better justification; the latter is used to disqualify the accused from further debate since the acceptance of self-evident facts by all parties is a prerequisite for rational argument. Secondly, alarmism can be a worrying consequence of a number of procedural and methodological strategies and errors, and these need to be acknowledged and assessed. Carping about supposedly pejorative terms, and using offence taken as an excuse to walk away from the discussion, leaves such issues unexplored.

Well, today I want to explore those issues. In so doing, I will demonstrate that, far from being a pathologizing slur, the epithet of alarmist is often a perfectly legitimate and technically correct label that helps illuminate the debate rather than close it down. So, without further delay, here are the various technical considerations that can form the basis for pathology-free accusations of alarmism.

The Signal Detection Theory (SDT) perspective

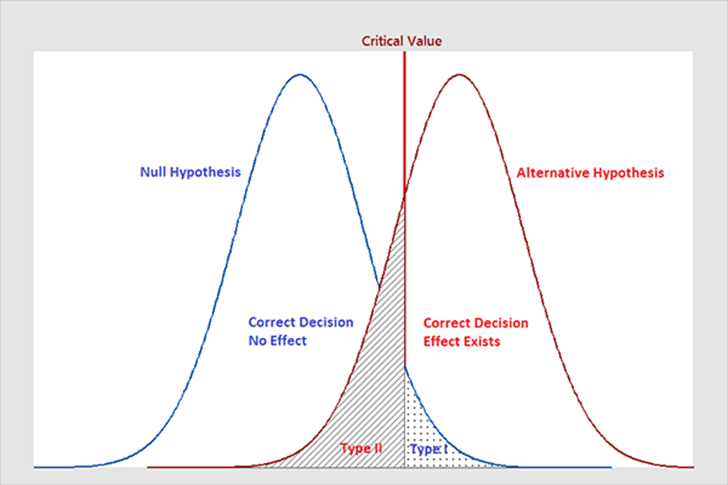

SDT is relevant to the study of alarmism because it provides a formal basis for determining an individual’s propensity to distinguish a significant signal from background noise. As such, it is a branch of psychology that connects with decision theory by drawing a distinction between a tendency to make false alarms (alarmism) and a tendency to overlook important indicators (complacency). The classic SDT diagram that illustrates the distinction is shown in Fig 1.

Fig 1. In a threat scenario, Type I errors are precautionary false alarms and Type II errors are failures to detect the threat.

Fig 1 shows two overlapping probability distributions, one for the noise (the null hypothesis) and the other for the signal (the alternative hypothesis). A ‘critical value’ has been set to define the threshold demarking which hypothesis is accepted. This is a useful representation because it highlights an important point: for any given choice of ‘critical value’, there are two basic categories of error that can be made.

The first is the so-called Type I error, indicated by the area under the null hypothesis curve to the right of the critical value. This represents the probability that noise could be misread as signal, thereby causing the alternative hypothesis to be accepted in error. The second is the Type II error, indicated by the area under the alternative hypothesis curve to the left of the threshold. This represents the probability that a signal could be misread as noise, thereby causing the alternative hypothesis to be rejected in error. To reduce the likelihood of Type I errors one can readjust the critical value used to delineate statistical significance, but that will only increase the likelihood of Type II errors.
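
The trade-off described above can be made concrete with a small sketch. It assumes, purely for illustration, that the noise and signal curves are normal distributions with equal spread and means of 0 and 2; neither those numbers nor the function names come from Fig 1.

```python
import math


def normal_cdf(x, mean=0.0, sd=1.0):
    """Cumulative distribution function of a normal distribution."""
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))


def error_rates(critical_value, noise_mean=0.0, signal_mean=2.0, sd=1.0):
    """Return (type_i, type_ii) for a given critical value.

    type_i:  area under the noise curve to the right of the threshold
    type_ii: area under the signal curve to the left of the threshold
    The means and spread are assumed values, not taken from the article.
    """
    type_i = 1.0 - normal_cdf(critical_value, noise_mean, sd)
    type_ii = normal_cdf(critical_value, signal_mean, sd)
    return type_i, type_ii


# Sliding the threshold to the right trades false alarms for missed signals.
for c in (0.5, 1.0, 1.5):
    t1, t2 = error_rates(c)
    print(f"critical value {c:.1f}: Type I = {t1:.3f}, Type II = {t2:.3f}")
```

With these assumed curves, a threshold placed midway between the two means gives equal Type I and Type II error rates; moving it in either direction lowers one rate only by raising the other.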

Knowing where to place the critical value is a subjective value judgment made by the decision-maker. If one applies utility theory to account for the costs of the Type I and Type II errors, one can formulate the choice (β) using the following equation:

β = ((TCR – FA) × prob(noise)) / ((TCD – TM) × prob(signal))

Where:

• TCD is the value of a threat correctly detected

• TCR is the value of threat correctly rejected

• TM is the cost of a threat missed

• FA is the cost of a false alarm
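
As a hedged sketch of how the β formula above might be evaluated, the following assumes that the two costs (TM and FA) are entered as signed payoffs, i.e. as negative numbers, so that each bracket is the full swing between a right call and a wrong one. The `critical_value` helper is an additional assumption: it converts β into a threshold only for the textbook case of two unit-variance normal curves whose means are `d_prime` apart.

```python
import math


def beta(tcd, tcr, tm, fa, p_signal):
    """Criterion beta from the formula quoted in the text.

    Assumption: tm and fa are signed payoffs (negative numbers for costs).
    """
    p_noise = 1.0 - p_signal
    return ((tcr - fa) * p_noise) / ((tcd - tm) * p_signal)


def critical_value(beta_value, d_prime):
    """Threshold at which the likelihood ratio equals beta.

    Assumes noise ~ N(0, 1) and signal ~ N(d_prime, 1); beta_value must be > 0.
    """
    return math.log(beta_value) / d_prime + d_prime / 2.0


# Hypothetical payoffs: symmetric stakes versus a heavily penalised miss.
balanced = beta(tcd=1.0, tcr=1.0, tm=-1.0, fa=-1.0, p_signal=0.5)
miss_averse = beta(tcd=1.0, tcr=1.0, tm=-10.0, fa=-1.0, p_signal=0.5)
print(balanced, critical_value(balanced, d_prime=2.0))
print(miss_averse, critical_value(miss_averse, d_prime=2.0))
```

Under these assumptions, making a missed threat ten times as costly as a false alarm pushes β below 1 and pulls the threshold towards the noise curve, which is exactly the setting that generates more false alarms.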

It is important to emphasise the subjectivity involved here. A β calculation that leads to more Type I errors than Type II errors may give the appearance of alarmism. However, with different value and cost judgments in mind, the same decisions may just represent precautionary rationality. So, a true alarmist has to be someone who has used invalid TCD, TCR, TM, FA or probability values to arrive at a Type I error preference.

Would such an accusation of alarmism be pathologizing? Certainly not. Even if incorrect TCD, TCR, TM, FA or probability values are being used, there is no suggestion that this is the result of a ‘broken’ psychological state; it would just suggest a miscalculation*. Indeed, the tendency to perceive significant patterns where there is only noise is a well-understood human trait called apophenia. In circumstances where the signal represents a threat, apophenia naturally aligns with alarmism, and for good reason. Also, keep in mind that a β calculation is made with respect to a particular decision-making scenario. There is nothing to suggest that the individual who favours an alarm-biased setting for one such scenario would automatically do the same in a different one.

That said, there are people who are ideologically or morally more opposed to Type II errors than to Type I errors. Naomi Oreskes is one such individual, since she has called out scientists for being far too cautious in avoiding false alarms. For her, Type I errors are a moral preference. By any definition of the term, Oreskes is an alarmist.

The risk aversion perspective

Most people have a tendency to be risk averse. Technically expressed, this means that our Certainty Equivalent (CE) is less than the Expected Value (EV) of a risky prospect. In plain language, we would be prepared to accept a guaranteed pay-off of £45 rather than take a 50-50 gamble that pays off either £100 or nothing. That is because we usually like to know where we stand regarding the future and are prepared to pay a price for that privilege. It’s also part of the reason why we are prepared to pay a known insurance premium rather than take our chances.

The explanation for risk aversion is given by Utility Theory. The CE is found by solving for the wealth level (W) where the utility of the certain wealth equals the expected utility of the risky prospect, i.e. U(CE) = E[U(W)]. For a risk-averse individual the utility function U is concave, so the utility of the average outcome is always higher than the average utility of the outcomes, which forces the CE to be lower than the EV.
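
To illustrate the relation U(CE) = E[U(W)], here is a minimal sketch that assumes a square-root utility function. That choice is only one convenient concave example and is not something the argument depends upon.

```python
import math


def certainty_equivalent(outcomes, probabilities, utility, inverse_utility):
    """Solve U(CE) = E[U(W)] by inverting the utility function."""
    expected_utility = sum(p * utility(w) for w, p in zip(outcomes, probabilities))
    return inverse_utility(expected_utility)


# The 50-50 gamble between 100 pounds and nothing.
outcomes = [100.0, 0.0]
probabilities = [0.5, 0.5]

expected_value = sum(p * w for w, p in zip(outcomes, probabilities))
ce = certainty_equivalent(
    outcomes,
    probabilities,
    utility=math.sqrt,                  # assumed concave utility
    inverse_utility=lambda u: u ** 2,   # inverse of the square root
)
print(f"EV = {expected_value:.2f}, CE = {ce:.2f}")
```

With this assumed utility the certainty equivalent of the gamble is half its expected value, so a guaranteed £45 would be accepted readily. A less sharply curved utility function would bring the CE closer to the EV.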

The concave nature of the average person’s utility function not only explains their risk aversion, it also provides the basis for understanding alarmism, i.e. an exaggerated or excessive reaction to a potential risk. This understanding is provided by an extension to Utility Theory called Prospect Theory, in which the standard concave utility curve is extended into an S-shape that defines two domains: one for gains and one for losses. The curve marking out the loss domain is steeper than that for the gain domain, indicating that people feel losses much more sharply than they do an equivalent gain. In the case of alarmism, the curve is so steep for losses, that even a small potential negative event can trigger an outsized emotional and behavioural response, leading individuals to over-insure or react with alarm to minor risks.
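
The asymmetry between gains and losses can be sketched with a simple power-law value function. The default parameters below (exponent 0.88, loss-aversion multiplier 2.25) are the median estimates reported by Tversky and Kahneman in 1992; the larger multiplier used for the ‘alarmist’ case is a purely hypothetical number chosen to show the effect of a steeper loss curve.

```python
def prospect_value(x, alpha=0.88, loss_aversion=2.25):
    """S-shaped value function: concave for gains, steeper and convex for losses."""
    if x >= 0:
        return x ** alpha
    return -loss_aversion * ((-x) ** alpha)


gain = prospect_value(100.0)
loss = prospect_value(-100.0)
steep_loss = prospect_value(-100.0, loss_aversion=6.0)  # hypothetical steeper curve

print(f"felt value of +100: {gain:.1f}")
print(f"felt value of -100: {loss:.1f}")
print(f"felt value of -100 with a steeper loss curve: {steep_loss:.1f}")
```

In this sketch the only thing separating the typical response from the exaggerated one is the size of a single multiplier, which is consistent with treating alarmism as a matter of degree rather than of kind.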

None of this pathologizes the alarmist, since risk aversion is a perfectly normal human trait, as indeed is the loss aversion proposed by Prospect Theory. The alarmist is not marked out by having a concave utility function, but by having one with a particular degree of curvature, as measured by the Arrow-Pratt measure of risk aversion. As with the choice of β in Signal Detection Theory, the degree of curvature is subjective and may have a perfectly rational basis as far as the individual is concerned. That said, because we tend to overweight the significance of rare, ‘catastrophic’ events while underweighting highly likely but less severe ones, we sometimes lose sight of the probabilities altogether. This problem is particularly acute at the low end of the probability scale, leading to an assumption of ‘fat tail’ risks.
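
The role of curvature can be sketched by assuming a constant-relative-risk-aversion (CRRA) utility function, for which the Arrow-Pratt absolute measure -U''(w)/U'(w) works out as gamma / w. The CRRA family, the gamma values and the 50-or-150 gamble are all illustrative assumptions.

```python
import math


def crra_utility(w, gamma):
    """CRRA utility; gamma = 0 is risk neutral, larger gamma is more curved."""
    if gamma == 1.0:
        return math.log(w)
    return w ** (1.0 - gamma) / (1.0 - gamma)


def crra_inverse(u, gamma):
    """Inverse of crra_utility, returning the wealth level with utility u."""
    if gamma == 1.0:
        return math.exp(u)
    return (u * (1.0 - gamma)) ** (1.0 / (1.0 - gamma))


def crra_certainty_equivalent(outcomes, probabilities, gamma):
    """Certainty equivalent of a gamble over strictly positive wealth levels."""
    eu = sum(p * crra_utility(w, gamma) for w, p in zip(outcomes, probabilities))
    return crra_inverse(eu, gamma)


def arrow_pratt_absolute(w, gamma):
    """Arrow-Pratt absolute risk aversion for CRRA utility."""
    return gamma / w


# Hypothetical 50-50 gamble between wealth levels of 50 and 150 (EV = 100).
for gamma in (0.0, 1.0, 2.0, 4.0):
    ce = crra_certainty_equivalent([50.0, 150.0], [0.5, 0.5], gamma)
    print(f"gamma = {gamma}: A(100) = {arrow_pratt_absolute(100.0, gamma):.3f}, CE = {ce:.2f}")
```

The same gamble is valued progressively lower as the curvature parameter rises, yet every one of these valuations follows from the same rational procedure.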

Probability blindness and storylines

Probability blindness (also known as Neglect of Probability) is a cognitive bias in which the impact of a risk is concentrated upon, to the detriment of any consideration of likelihood. As explained above, this can be particularly prevalent when dealing with low probability, high impact risks, in which an emotional reaction to a vivid scenario can be accompanied by probabilities that are very difficult to calculate. Probability blindness is a primary driver of alarmism because it causes rare and unlikely risks to be viewed as imminent, certain threats. Whereas the low probability should serve to moderate concerns, plausibility starts to take on the prominent role, thereby rendering impact the only risk factor to be considered.
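
A toy risk register shows what is lost when likelihood drops out of the picture. The entries and numbers below are entirely hypothetical, and expected loss (probability times impact) is used only as one conventional way of combining the two factors.

```python
# Hypothetical register: name -> (annual probability, impact in pounds).
risks = {
    "frequent minor flood": (0.20, 10_000.0),
    "rare major flood": (0.001, 1_000_000.0),
    "very rare catastrophe": (0.00001, 50_000_000.0),
}


def rank_by_expected_loss(register):
    """Order risks by probability multiplied by impact, largest first."""
    return sorted(
        register,
        key=lambda name: register[name][0] * register[name][1],
        reverse=True,
    )


def rank_by_impact_only(register):
    """Order risks by impact alone, as a probability-blind assessor would."""
    return sorted(register, key=lambda name: register[name][1], reverse=True)


print("by expected loss:", rank_by_expected_loss(risks))
print("by impact only:  ", rank_by_impact_only(risks))
```

With these made-up figures the two rankings are exact opposites: the scenario that dominates the impact-only view contributes least to the expected loss.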

Viewed properly, probability blindness should be seen as a likely cause of a flawed risk assessment. However, within the field of climate risk assessment, there has been a growing trend to abandon assessments based upon risk calculations in favour of assessments based purely upon scenarios supported by physically plausible storylines. There are a number of reasons behind this development, but the primary motivator seems to be that the ‘true’ risk is deemed to reside within the ‘fat tails’, and avoiding the tricky job of calculating what are likely to be unreliable probabilities helps to focus upon the most important issues. Concern that this may result in Type I errors is dismissed by recourse to the moral argument introduced above: better to make a Type I error than a Type II because it is better to be safe than sorry.

I have nothing against using storylines as an important precursor to performing a risk calculation and, if the latter is not feasible, I have nothing against accepting the storyline as the best we have to inform policy. However, let it not be said that a technique that makes no effort to calculate probabilities is a technique for calculating risk. Consequently, let it not be said that the resulting policy is risk-based. In fact, whereas the potential hazards described by the storyline may be well-understood, the risks are still unknown, and developing a policy based upon an aversion to unknown risk is a recipe for alarmism. The reason for this lies in the concept of ambiguity aversion.

Ambiguity aversion

So far, I have spoken about risk and the uncertainty upon which it is predicated, but I haven’t said anything regarding the nature of the uncertainty and how that might influence the perception of the risk. So, it is at this stage that I need to explain Ellsberg’s Paradox and the concept of ambiguity aversion.

When Ellsberg introduced his paradox, he illustrated it using a thought experiment in which an individual was invited to choose between gambles that involved blindly picking coloured balls out of a jar. The set-ups were designed such that each choice involved gambles with different components of epistemic uncertainty, i.e. for one option, a knowledge of the numbers of varying colours enabled the probabilities to be objectively calculated, whilst for the other option a lack of such knowledge prevented such a calculation. In the absence of an objective basis for calculating the odds, the individual would have to resort to subjective probabilities. Although originally a thought experiment, subsequent trials have repeatedly confirmed that individuals prefer the gamble that enables them to objectively calculate the probabilities, even when the logically derived subjective probabilities were equivalent. The two gambles would involve risk and uncertainty of the same scale but when the gamble involved epistemic uncertainty (as opposed to aleatory) the risk was deemed to be greater and the gamble was avoided.
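
The paradoxical nature of the preference can be sketched using a simplified two-jar variant, assumed here for illustration: one jar is known to hold red and black balls in equal numbers, the other holds an unknown mix. People typically prefer the known jar whether they are betting on red or on black. The first function checks whether any single subjective probability could justify both choices; the second applies a worst-case rule over a range of possible probabilities, which is one commonly proposed model of ambiguity aversion. The function names and the chosen range are hypothetical.

```python
def priors_consistent_with_both_choices(steps=100):
    """Subjective P(red) values for the unknown jar that would make the
    known 50-50 jar strictly better for BOTH the red bet and the black bet."""
    consistent = []
    for i in range(steps + 1):
        p_red = i / steps
        prefers_known_for_red = 0.5 > p_red
        prefers_known_for_black = 0.5 > 1.0 - p_red
        if prefers_known_for_red and prefers_known_for_black:
            consistent.append(p_red)
    return consistent


def worst_case_win_probability(p_red_low, p_red_high, bet_on_red):
    """Lowest win probability when P(red) may lie anywhere in [low, high]."""
    return p_red_low if bet_on_red else 1.0 - p_red_high


print(priors_consistent_with_both_choices())
print(worst_case_win_probability(0.3, 0.7, bet_on_red=True))
print(worst_case_win_probability(0.3, 0.7, bet_on_red=False))
```

No single subjective probability survives the first check, which is why the observed behaviour counts as a paradox. Under the worst-case rule, however, both bets on the unknown jar look worse than the even chance offered by the known jar.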

A number of possible explanations have been offered for this paradox, including explanations that suggest that social pressure or distrust of the set-up are factors. However, by far the most obvious explanation lies in the working of the brain. For your average brain, not knowing the odds objectively is not dealt with by the logic centres of the prefrontal cortex. Instead, it is processed in the amygdala** and treated as ‘that predator in the tall grass’. You don’t know if the predator is there, but the alarm triggers anyway because the cost of being wrong is too high. In this case, alarmism is simply the cognitive response to a pulse of fear. There may not be any tigers in the long grass when you’re picking balls out of a jar, but the brain is working as evolution has taught it to work, and this manifests as a subconscious desire to avoid the unknown risk.

The main point to take away here is that the alarmism (heightened perception of risk) that results from this brain mechanism is universal. Despite the fact that it leads to irrational behaviour, it is not a pathology but a sign of a brain working as it is designed to. We are all ambiguity averse and neuroimaging studies have confirmed this. Je suis alarmiste!

Weaponizing uncertainty

Given that the climate change debate revolves around issues of decision-making under uncertainty, it is unsurprising that those on both sides of the argument have conflicting views regarding uncertainty’s true significance. It is often said that uncertainty is not the sceptic’s friend, but the truth is that it favours no one, and it is used as a weapon whichever hand it is in.

In the uncertainty wars, Stephan Lewandowsky has emerged as a particularly belligerent combatant. Not only does he harshly criticise those who wish to see a reduction in epistemic uncertainty before taking action (he accuses them of using a SCAM – Scientific Certainty Argumentation Method) he also purports to have a mathematical proof that higher uncertainty invariably makes the case for the climate activists.

Lewandowsky’s ‘proof’ derives from Jensen’s Inequality. It asserts that because climate damage costs are convex (accelerating as temperatures rise), the expected cost of an uncertain future is strictly higher than that of a certain average one. Stated formally, if the damage function d(x) is convex, then for any random variable x, it follows that E[d(x)] >= d(E[x]), where E is the expected value. He therefore draws the conclusion that high levels of uncertainty are not an excuse to wait for more clarity but a cause for greater concern and more urgent action. He refers to this as ‘Actionable Uncertainty’ or ‘Uncertainty as Knowledge’.
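
The inequality itself is easy to demonstrate numerically. The sketch below assumes a quadratic damage function and two made-up three-point distributions with the same mean but different spreads; none of these numbers comes from Lewandowsky’s work.

```python
def expected(values, probabilities):
    """Expected value of a discrete random variable."""
    return sum(p * v for v, p in zip(values, probabilities))


def damage(x):
    """Hypothetical convex damage function: cost accelerates with warming."""
    return x ** 2


probabilities = [0.25, 0.5, 0.25]
narrow = [2.5, 3.0, 3.5]   # low uncertainty, mean 3.0
wide = [1.0, 3.0, 5.0]     # high uncertainty, same mean 3.0

for label, values in (("narrow", narrow), ("wide", wide)):
    mean = expected(values, probabilities)
    expected_damage = expected([damage(v) for v in values], probabilities)
    print(f"{label}: d(E[x]) = {damage(mean):.3f}, E[d(x)] = {expected_damage:.3f}")
```

Both distributions give an expected damage at or above the damage at the mean, and the wider one gives the larger figure. Note, though, that the calculation needs the probabilities as inputs.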

Ultimately, while his maths is internally consistent, it relies upon the philosophical assumption that subjective uncertainty can be treated with the same mathematical approach as physical variability, i.e. he is using a mathematical proof that works perfectly well for aleatory uncertainty but fails when the epistemic components start to dominate. His failure is not to have appreciated that in the real world there is always ambiguity and so we can have uncertainty about our uncertainty. If our estimate of the risk is itself unreliable, the mathematical necessity of his conclusion disappears. So, to make his maths work, he treats the space of possible distributions as something we can sample: he assumes we can construct a Probability Distribution of Probability Distributions. But this is an unsound assumption (as I have said before, modelling is not measurement). His concept of ‘Uncertainty as Knowledge’ sounds like a category error because that is exactly what it is. At the end of the day, you cannot perform a mathematical operation on a lack of information to produce more certain information.

So, in conclusion, if you accept the Bayesian axiom that all uncertainty must be representable as a PDF for a rational agent, then his proof is sound. But if you believe deep uncertainty (ambiguity) can violate this axiom, then his proof is a sleight of hand. It uses the language of mathematics to turn a “we don’t know” into a “we are certain it is dangerous”. At its heart, his position is ambiguity aversion, not risk aversion. And that makes his argument just as much alarmist as it is precautionary.

Issues of ergodicity

An assumption that lies at the heart of much of risk management is that the ensemble average will equal the time average. For example, the average of throwing 1000 dice simultaneously should be the same as the average produced by a single die after 1000 throws. Such a situation is referred to as ‘ergodic’. However, life often isn’t like that. One often encounters situations where a single catastrophic failure (ruin or extinction) ends the possibility of future participation in the ‘game’, making time average statistics irrelevant. This is known as a non-ergodic system, and those who are particularly concerned regarding tipping points and their potentially catastrophic outcomes have used this as an argument against conventional, probability-based risk calculations. Indeed, Joachim Schellnhuber, a prominent promoter of the dangers of tipping points, has accused the IPCC of a ‘probability obsession’. Furthermore, the fear of tipping points lies behind his precautionary advocacy of the 2.0°C (now revised to 1.5°C) limit beyond which global warming becomes potentially catastrophic. There are no probability calculations behind the setting of such limits.
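
The distinction between ergodic and non-ergodic situations drawn at the start of this section can be sketched with two hypothetical games, neither of which is taken from the climate literature. In the first, wealth is multiplied by 1.5 or 0.6 on the toss of a fair coin; in the second, each round carries a small chance of irreversible ruin.

```python
import math


def ensemble_growth_factor(up, down, p_up=0.5):
    """Average per-round multiplier across many simultaneous players."""
    return p_up * up + (1.0 - p_up) * down


def time_average_growth_factor(up, down, p_up=0.5):
    """Long-run per-round multiplier experienced by one player over time."""
    return math.exp(p_up * math.log(up) + (1.0 - p_up) * math.log(down))


def survival_probability(p_ruin_per_round, rounds):
    """Chance that a single player is still in the game after a number of rounds."""
    return (1.0 - p_ruin_per_round) ** rounds


print(f"ensemble factor: {ensemble_growth_factor(1.5, 0.6):.4f}")
print(f"time-average factor: {time_average_growth_factor(1.5, 0.6):.4f}")
print(f"survival after 100 rounds at 1% ruin risk: {survival_probability(0.01, 100):.3f}")
```

In the first game the average across players grows each round while the typical individual's wealth shrinks over time, so the two averages part company. In the second, a risk that looks negligible in any single round leaves only about a one-in-three chance of still being in the game after 100 rounds.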

From the perspective of someone who believes the risk of tipping points to be exaggerated, individuals such as Schellnhuber, who are stressing the non-ergodic nature of climate change risk, might appear to be alarmist. To the extent that Schellnhuber bases his thoughts on sound scientific ideas that are shared by the scientific community, such accusations would be unfounded. However, it is important to examine exactly what Schellnhuber said on this subject when providing the foreword to a think tank report titled, ‘What Lies Beneath’:

Experts tend to establish a peer world-view which becomes ever more rigid and focussed. Yet the critical insights regarding the issue in question may lurk at the fringes, as this report suggests.

Indeed, Schellnhuber goes on to advocate a focus upon the non-expert or fringe viewpoint precisely because the experts have failed to deliver enough ‘drama’ in the past:

After delivering five fully-fledged assessment reports, it is hardly surprising that a trend towards “erring on the side of least drama” has emerged.

Having bemoaned a lack of drama, he concludes with a stated position that looks awfully like probability blindness:

So, calculating probabilities makes little sense in the most critical instances…Rather we should identify possibilities, that is, potential developments in the planetary makeup that are consistent with the initial and boundary conditions, the processes and the drivers we know.

At the end of the day, and despite his obvious scientific credentials, Schellnhuber sits side-by-side with militant activists such as Roger Hallam, co-founder of Extinction Rebellion, who said:

The rapid heating and extreme events of the last year demonstrate that overall predictions of institutionalised climate science were less accurate than the conclusions of generalist scholars and leading climate activists, who better saw the frightening signals through the noise produced from siloes, hierarchies, and privilege.

There is nothing inherently alarmist about concerns over non-ergodicity, but when this concern is voiced by someone who has turned his back on the institutionalised scientific community because it has erred on the side of least drama, ‘alarmist’ starts to look like the most appropriate descriptor.

Over-analysis

During my professional experience of conducting risk analyses, one particular rule became abundantly apparent: the longer one took studying potential risk, and the deeper one looked into it, the more serious and alarming the situation would seem. In road traffic safety, this phenomenon had a name: the school bus, nuclear convoy scenario. No matter the situation being assessed, one could almost guarantee that enough people working on it for long enough would eventually identify the worst-case scenario as being a school bus crashing into a convoy carrying radioactive materials. And once this scenario had been identified, it came to dominate the risk profile, resulting in over-engineered safety measures.

In general terms, over-analysis provides the road to alarmism by focusing intensely on worst-case scenarios and theoretical risks to create a narrative of looming catastrophe. While over-analysis generally suggests a deep and thorough study, in the context of climate change it often leads to an overemphasis on negative outcomes while ignoring uncertainty, natural variability, or potential adaptation strategies.

But quite apart from the fixation on worst-case scenarios, over-analysis within academia has also resulted in such a bewildering plethora of concerns related to climate change that one must surely regret the amount of time and grant money invested. Everything from shrinking penises to the thickening of whale earwax has been linked to climate change. And I do mean everything. The real challenge is to find something alarming that it hasn’t caused. Which brings me nicely to my next concern.

The half-cut causal analysis

Causal analysis plays a huge role in the understanding and communication of risks such as those encountered in climate science. However, when such analysis is performed, it is essential that all aspects of causality are considered. Focusing upon one factor, particularly when it is the only factor that is routinely quantified, can lead to a distorted and often exaggerated perception of risk.

This distortion can be explained in terms of necessary and sufficient cause. A necessary cause is a precondition or event that has to feature in order for the resulting effect to be present. A sufficient cause is a precondition or event that guarantees the effect. Alarmism often grows when the necessary is treated as being the sufficient, or when a single sufficient cause is examined in isolation. By concentrating upon a particular necessary cause, one can develop the view that it is of singular importance. Yes, its presence may dramatically heighten the risk, but that has to be assessed in comparison with the other causal factors. Only by fully analysing (preferably by quantifying) the full necessity and sufficiency of causes can one understand where the primary alarm should be felt, and how strong that feeling should be.
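
The gap between necessity and sufficiency can be quantified with a minimal sketch. It uses the standard closed-form expressions from the causal inference literature, which hold only under simplifying assumptions (the factor is not confounded and never makes the event less likely). Here `p_factual` is the probability of the event with the factor present and `p_counterfactual` the probability without it; the example numbers are hypothetical. In attribution work the first quantity is commonly reported as the fraction of attributable risk.

```python
def probability_of_necessity(p_factual, p_counterfactual):
    """PN = 1 - p0/p1, floored at zero."""
    return max(0.0, 1.0 - p_counterfactual / p_factual)


def probability_of_sufficiency(p_factual, p_counterfactual):
    """PS = 1 - (1 - p1)/(1 - p0), floored at zero."""
    return max(0.0, 1.0 - (1.0 - p_factual) / (1.0 - p_counterfactual))


# Hypothetical event: 1% annual chance without the factor, 3% with it.
pn = probability_of_necessity(p_factual=0.03, p_counterfactual=0.01)
ps = probability_of_sufficiency(p_factual=0.03, p_counterfactual=0.01)
print(f"probability of necessity:   {pn:.3f}")
print(f"probability of sufficiency: {ps:.3f}")
```

With these made-up numbers the factor is probably necessary for the event (about two chances in three) while being almost never sufficient (about 2%). Quoting the first figure without the second invites exactly the misplaced focus described above.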

A fine example of this misplaced focus can be found in the extreme weather event attribution studies as they relate to wildfire risk. A great deal of interest has been shown in analysing and quantifying the necessity of climate change to understand recent trends in fire risk (the Fraction of Attributable Risk calculated in an attribution study is equivalent to the probability of necessity used in causal analysis). The resulting claims that fire weather risk has significantly increased have invariably caused alarm – as they were meant to. And yet, despite acknowledging other factors such as trends in arson, forest management, the growth of forest/conurbation boundaries, and reductions in fire detection and management resources, their relative impact is rarely, if ever, quantified. On this subject, climate scientist Patrick Brown of the Breakthrough Institute has said:

To put it bluntly, climate science has become less about understanding the complexities of the world and more about serving as a kind of Cassandra, urgently warning the public about the dangers of climate change. However understandable this instinct may be, it distorts a great deal of climate science research, misinforms the public, and most importantly, makes practical solutions more difficult to achieve.

Similar focus can be found in studies of recent flooding in the Asian subcontinent. Attribution studies will tell you how much the probability of necessity due to anthropogenic climate change influences the risk, but little effort is made to quantify just how much the increased flooding can be put down to other significant factors such as deforestation.

Localised urban subsidence, as compared to global sea level rise, is another case in point. The tendency is to see the subsidence as a problem that exacerbates the impact of the latter, when the numbers would seem to suggest that the reverse is true. The primary role afforded to climate change is an artefact of treating its necessity as if it were a sufficiency.

Exploiting behavioural insights

So far, I have focused upon technical issues that can lead to an unjustified perception of heightened risk. In all cases, the accusation of alarmism stems from either methodological strategies and errors, or commonly-held cognitive biases; no pathologizing of the individual is involved. The other side to the alarmist coin, however, is the communication of information that is deliberately designed to create anxiety. Once again, whilst this tactic may seem cynical, there is no question of the term ‘alarmist’ being used here to pathologize. Such a communication strategy usually forms part of a highly rational and politically motivated campaign of persuasion, usually bolstered by the insights of behavioural science.

When it comes to encouraging society to take a risk seriously it matters to the authorities that you be afraid – very afraid. We saw this, for example, during the Covid-19 pandemic, when David Halpern, founder of the UK’s Behavioural Insights Team (aka the ‘Nudge Unit’) openly talked of ‘recalibrating’ the public’s level of fear in order that it matched the seriousness of the threat posed. Similarly, the IPCC has spoken of using ‘social cognitive theory to develop a model of climate advocacy to increase the attention given to climate change in the spirit of social amplification of risk’.

Depending upon the circumstances, such techniques may seem well-intended and justified. If society is insufficiently alarmed with regard to a particular threat, then it might seem unfair to label those who seek to address this shortfall as ‘alarmists’, even though promoting alarm is their stated purpose. However, there are those, including myself, who are uncomfortable with the idea of an intellectual elite deciding what is good for the masses and then using techniques of persuasion designed to appeal directly to the reptile brain in order to encourage compliance with policy. Such tactics are effective because they exploit the Affect Heuristic, in which negative emotion causes the individual to perceive the risk as being higher. Yes, it is very effective, but that does not make it right.

Furthermore, using fear as a tool of persuasion is not without its unwanted side-effects. Eco-anxiety is now recognised as a significant mental health concern, particularly amongst children. We are supposed to believe that those with such anxiety are responding directly to an objective level of threat as it has been carefully explained to them. The reality is that they are responding to politicians and activists using alarming hyperbole, and relentless newspaper and television articles designed to keep the fear going. You can’t even relax in front of your favourite soap opera nowadays, as you are drip-fed with storylines in which actors willingly act out the climate angst you are supposed to be experiencing for yourself. It is indeed the social amplification of risk.

My final word about that word

If you have made it this far you will understand that I consider alarm to be healthy as long as it is appropriate. Unfortunately, there are cognitive issues and potential methodological errors to be considered, each of which provides an avenue to inappropriateness. But whether it be a tendency to sound too many false alarms by reading too much into a noisy signal, being overly sensitised to loss, being naturally predisposed to treating ambiguity as risk, or believing that being blind to probability is the best way to discern ‘true’ risk, there is no question of an individual being pathologized by being accused of alarmism. Knowing the right level of alarm to adopt is a matter of judgment. It is highly subjective and very human. There should always be scope for discussing such judgments, and raising the possibility of alarmism is the starting point for such discussion, not the end.

———

*The same should be true, of course, for an unduly low value for β. However, the term that psychologists have invented for this phenomenon is the ‘Slothful Induction Fallacy’. Sloth is a sin and a fallacy is at best a foolish idea and at worst a deception. So, I don’t think that those at the other end of the spectrum should be complaining about being called an alarmist.

**To be precise, it is processed in the amygdala, dorsolateral prefrontal cortex (dlPFC), inferior parietal lobe (IPL) and right anterior insula. Ambiguity triggers higher activity in these regions compared to risk, with specific activation of the striatum and ventromedial prefrontal cortex (vmPFC).

Thank you John – Cliscep at its best. Educational and informative, impartial and clear.

Teaching kids the skills to conduct Fermi estimates would be good for them and the planet and their ability to consider and weigh up alternatives in whatever arena, not just environmental ones. Could be fun too with well-chosen examples.

John – a bit over my head at times, but Bravo for that post.

What I found most interesting for me was The risk aversion perspective section.

Bit O/T – I have retirement money invested in stocks & shares & they asked that question when we invested. We are not rolling in money, but have a decent amount invested. Take it financial advisers all use “Utility Theory” to ask the same question.

Helpful analysis John. Thank you!

Dfhunter,

Yes, I’m afraid decision theory can get shockingly complicated rather quickly. Not only can it get mathematically challenging, it is also mired in philosophical controversies. The Lewandowsky argument is a case in point. I am saying that in the face of Knightian uncertainty, one cannot talk of PDFs, let alone PDFs of PDFs, and so Jensen’s Inequality does not apply. The counter argument is that the inequality is a topological concept and so ordinal arguments such as Lewandowsky’s still apply, even in conditions when cardinal ones don’t. I am in a group (e.g. Keynes and Knight, et al) that rejects that counter argument, but the controversy rages on.

At the end of the day, I am calling for an end to the uncertainty wars and I advocate instead that a Robust Decision Making (RDM) approach be taken in which decisions are made that are valid for the widest range of possible futures, including but not limited to the worst case. In this approach the decisions are constantly reviewed as epistemic uncertainty is reduced. It is not a case of using convex damage curves to decide between action and inaction. The alternative to RDM is, in my opinion, ambiguity aversion spliced with the precautionary principle, and my concern is that this leads to alarmist strategies.

Dfhunter,

P.S. Financial investors, insurers and actuaries are heavily into the mathematics of risk aversion. Financial risk analysis is not something I was ever involved in professionally. It’s quite a niche area.

I should have mentioned that there is another approach to decision making, Info-Gap Theory, that ignores both probabilities and worst-case scenarios. Instead, a so-called Robustness Function is calculated that indicates how much error can be tolerated before the outcome becomes unacceptable. In the parlance of Info-Gap, the valid decision is the one with the greatest Horizon of Uncertainty. Info-Gap is closely associated with RDM in that they are both adaptive management strategies specifically devised to cope with significant uncertainty without resorting to the precautionary principle with its implied deference to the worst case scenario.

John – “In the parlance of Info-Gap, the valid decision is the one with the greatest Horizon of Uncertainty”.

Now you are making my brain melt. I take it that means that, with no information to reach an informed decision, you just throw a dart at the dartboard, blindfolded!!!

Dfhunter,

I think it’s just a fancy way of saying that you should want a plan that has the maximum range of potential error whilst remaining feasible. A plan that only has to go a little bit wrong before failing is not a robust plan because it has a small Horizon of Uncertainty. They make these things sound complicated but at its heart it is quite simple – don’t overcommit, look for reversible options, constantly review the situation, and look for strategies that provide you with lots of options should things start to go wrong. The UK’s net zero plans fail on all of these counts.

John, I would add one additional rule: always ensure you have the clear support of those who are going to be most involved in implementing the plan’s objective.

This reminded me of Tony’s piece on Rode & Fischbeck of almost exactly 5 years ago. How time flies.

I wonder how many of the theoretical underpinnings of alarmism are actually post-hoc searches for a means to justify a position that has already been arrived at.

Thinking of my own “denialism,” I wonder also whether I have any defensible grounds for it, or whether I am as deluded as the alarmists. As I noted under Tony’s piece, if my thinking can be characterised as “proof by induction” then it potentially falls victim to misunderstanding the underlying system (as with the chicken that believes the farmer is its friend, based on induction). (Which reminds me in the inverse of how Catastrophism held sway until James Hutton put together evidence to the contrary – of course it held sway for some little time after he had realised that it was wrong. What guarantee is there that “as today, so tomorrow” holds true?)

Perhaps a follow-up “How to be a sceptic” might be in order? Do we have any justification for our beliefs that stand up to the merest scrutiny?

Jit,

Thank you for reminding me of Tony’s piece; his and mine do indeed work well as a pair. But I could say the same of any of the three related articles offered by WordPress. I was particularly struck by the following quote from your own:

If someone wants to take ‘alarmist’ as an ‘infantile smear’ and play the Orwell card, then I suppose there is nothing I can say to stop them. In fact, they are welcome to do so. I have no interest in being dragged into an argument about who is being the most infantile. I only want to talk about the technicalities of alarmism, and in doing so I use the word in a way that is technically accurate.

As for there being cause for reflection on the part of the sceptic, this is certainly the case. Some of the technicalities covered in my article cut both ways. So, one could write a ‘How to be a sceptic’ article focused upon methodological errors and cognitive biases, but I’ll leave that for others to write. An article titled ‘How to become a denier’ would also be easy to write:

Step 1 — become a sceptic

Step 2 — sit back and let your critics react by throwing the ‘denier’ insult.

John – thanks for your reply above. I should have reread your earlier comment – “Info-Gap is closely associated with RDM in that they are both adaptive management strategies”.

So your explanation makes sense to me now. In fact, I may have been using a similar “decision theory” combination most of my life, which I call “Common Sense + Look Before You Leap”. Not always successfully, I must admit 😦

To further illustrate the potential role of adaptive techniques such as Robust Decision Making and Info-Gap Decision Theory as a means of avoiding the uncertainty wars, I offer the following paper:

“Robust Decision Making and Info-Gap Decision Theory for water resource system planning”

https://www.sciencedirect.com/science/article/pii/S0022169413002060

The following passage is taken from its introduction:

“Water resource systems are sensitive to climate and population changes, making supply infrastructure planning difficult. Planning models grapple with the inherent uncertainty of future conditions when the statistical distributions of future conditions are unknown or not trusted. Under such ‘Knightian’ uncertainty (Knight, 1921) uncertainty is unquantifiable and the most likely realisation of the future is unknown. In such situations methods that rely on traditional Bayesian decision analysis to characterise uncertainty using probability theory may not be appropriate (Groves and Lempert, 2007). In such situations of ‘deep’ or ‘severe’ uncertainty, recent research has argued it is more appropriate to strive for robustness (Ben-Haim, 2001, Dessai and Hulme, 2007, Lempert et al., 2006, Lempert and Collins, 2007) rather than optimality. A ‘robust’ system performs satisfactorily, or satisfices (Simon, 1959) performance criteria, over a wide range of uncertain futures rather than performing optimally over the historical period or a few scenarios.”

In short, uncertainty should not be weaponised. There is no ‘uncertainty as knowledge’ that dictates a supposedly optimal action. Uncertainty is a lack of knowledge that dictates that a satisficing approach should be taken, accommodating a range of possible futures. Such an approach offers a way of being probability blind without running straight to the precautionary principle.
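
The difference between optimising and satisficing can be sketched in a few lines. The plans, futures, scores and the ‘good enough’ threshold below are all hypothetical, chosen only to illustrate the distinction drawn in the quoted passage; they are not taken from the paper.

```python
# Hypothetical performance scores (higher is better) of three plans in four futures.
performance = {
    "optimised for best guess": {"dry": 20, "central": 95, "wet": 40, "high demand": 30},
    "flexible": {"dry": 65, "central": 75, "wet": 70, "high demand": 60},
    "built for worst case": {"dry": 62, "central": 50, "wet": 45, "high demand": 61},
}
THRESHOLD = 60  # hypothetical 'good enough' score


def futures_satisfied(scores, threshold=THRESHOLD):
    """Count the futures in which a plan meets the performance threshold."""
    return sum(1 for score in scores.values() if score >= threshold)


def most_robust(plans, threshold=THRESHOLD):
    """Plan that satisfices in the largest number of futures (no probabilities used)."""
    return max(plans, key=lambda name: futures_satisfied(plans[name], threshold))


def best_in(plans, future):
    """Plan that performs best if one particular future is assumed to occur."""
    return max(plans, key=lambda name: plans[name][future])


print(best_in(performance, "central"))  # the 'optimal' choice if the best guess is trusted
print(most_robust(performance))         # the satisficing choice across all four futures
```

In this made-up example the plan tuned to the central estimate wins only if that estimate turns out to be right, and the plan built around the worst case gives up too much elsewhere. The flexible plan is never the best in any single future but is acceptable in all of them.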

In my article I explained that, according to Signal Detection Theory, an inappropriately alarmist β setting occurs when the threshold is shifted beyond what can be justified by the actual costs, benefits and likelihoods. The important point is that this would be an error of judgment resulting from common perceptual errors or strategic fallacies; it is not indicative of an individual suffering a form of psychopathology. However, to keep the article as short as possible, I did not elaborate on how such errors could come about. For anyone who is interested, I now list some of the more important ones below:

1. Underestimating the true cost of repeated false alarms

There is a tendency not to fully account for the accumulated cost of lost opportunity that arises from a ‘better safe than sorry’ approach. Also, repeated false alarms have a cost that is often overlooked. This cost may be measured in terms of alarm fatigue where the guard is ultimately dropped. A good deal of climate scepticism owes its existence to the repeated failures of alarmist climate predictions.

2. Base Rate Fallacy

A more liberal, alarmist threshold will be set if the individual believes a threat is more likely to occur than it is. The Availability Heuristic will influence this and, in that regard, it is pertinent to note that climate change reporting does a fine job of ensuring the threats are never very far from the mind (think extreme weather event reporting). It’s a bit like how the publicity given to shark attacks results in a skewed view of how likely they are to occur.

3. Probability blindness

This can lead to an obsession with the costs of a failure to detect, combined with a complete disregard for the costs associated with ensuring detection.

4. The Affect Heuristic

Research indicates that high levels of anxiety push individuals toward a more alarmist β setting, as they are more likely to interpret ambiguous or noisy cues as threats.

5. Motivation Bias

One will tend to see what one expects to see or wants to see. In scientific research this can lead to p-hacking.

6. Perceptual Uncertainty

When there is a lot of noise and uncertainty, the tendency is to compensate by adopting a heightened state of alert. It’s a form of ambiguity aversion.

7. Institutional or Social Pressure

One will tend to see what one’s peers see. This effect is exploited in the concept of the ‘social amplification of risk’.

8. Loss aversion

This is the ‘one death is one too many’ approach. It has emotional and societal appeal but it often doesn’t make sound economic sense. It just leads to an inappropriately liberal setting for β.

9. Seeing patterns that are not there

This is covered in my article as ‘apophenia’. Another way of looking at this is Nassim Taleb’s warning of the ‘theorizing disease’. Of course, he is not referring to a literal disease of the mind.
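
The first two errors in the list can be illustrated numerically. The sketch below uses the standard textbook expression for the expected-value-maximising criterion in Signal Detection Theory, in which β is the ratio of the prior odds of noise to signal multiplied by the ratio of the payoffs at stake. I am assuming that this textbook form is the relevant one here, and all of the probabilities and payoffs are hypothetical. A lower β means a more liberal threshold, i.e. a greater readiness to raise the alarm.

```python
def optimal_beta(p_signal, value_hit, cost_miss, value_correct_rejection, cost_false_alarm):
    """Expected-value-maximising likelihood-ratio threshold.

    All payoffs are entered as positive magnitudes.
    """
    p_noise = 1.0 - p_signal
    prior_odds = p_noise / p_signal
    payoff_ratio = (value_correct_rejection + cost_false_alarm) / (value_hit + cost_miss)
    return prior_odds * payoff_ratio


# Hypothetical 'true' situation: the threat is present 10% of the time.
justified = optimal_beta(0.10, value_hit=10, cost_miss=40,
                         value_correct_rejection=5, cost_false_alarm=5)

# Error 1: the cost of a false alarm is underestimated (5 becomes 1).
cheap_alarms = optimal_beta(0.10, value_hit=10, cost_miss=40,
                            value_correct_rejection=5, cost_false_alarm=1)

# Error 2: the base rate of the threat is overestimated (10% becomes 30%).
inflated_base_rate = optimal_beta(0.30, value_hit=10, cost_miss=40,
                                  value_correct_rejection=5, cost_false_alarm=5)

print(justified, cheap_alarms, inflated_base_rate)
```

In both cases the resulting β is lower than the justified value, so the threshold has been shifted in the alarmist direction by nothing more than a misjudged input.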

As an interesting aside, it is behavioural insights such as the above that inform the approach taken towards encouraging compliance with government policy. The IPCC’s AR5 WG3 Chapter 2 is full of it:

The IPCC on Risk, Part 1: New Developments? – Climate Scepticism

Just when I was on the verge of being persuaded that “alarmist” may be as inaccurate and offensive as denier….

“Heatwaves are becoming the norm. This is what Britain will look like in the year 2052

People sleep outside because their houses are too hot to inhabit, water is scarce and supermarkets are for the wealthy”

https://www.theguardian.com/commentisfree/2026/may/26/heatwaves-britain-2052-sleep-hot-houses-water-climate

As written by Bill McGuire, the vulcanologist who believes climate change will lead to more earthquakes. This is what Gemini had to say about him when I asked if ‘alarmist’ was a fair description of him:

Bill McGuire is Professor Emeritus of Geophysical and Climate Hazards at University College London (UCL) and a prominent climate activist who embraces the label of “alarmist”. He argues that given the scale of the climate emergency, conveying the terrifying reality of the crisis is necessary to spur governments and individuals into action.

Why He is Labeled an “Alarmist”

Embracing the Term: McGuire rejects the idea that “alarmist” is a dirty word. He believes that if humanity is facing a genuine existential threat, the only logical response is to be alarmed.

Direct Communication: He has famously stated that we should “be terrified” of the worst-case climate scenarios, arguing that sugar-coating the facts leads to complacency.

Radical Action: Unlike more conservative voices in the scientific community, McGuire openly advocates for disruptive activism—such as joining climate blockades—to force governments to reduce emissions drastically.

Criticisms and the Climate Debate

His willingness to use terrifying rhetoric and advocate for civil disobedience has drawn criticism:

Criticism:

Some critics and fellow scientists argue that doomerism or excessive alarmism can cause the public to panic, shut down, or feel that the problem is too large to fix, ultimately leading to apathy.

His Defense:

McGuire counters that failing to communicate the severity of the crisis is a bigger risk, though he consistently stresses that his warnings should be used to galvanize urgent solutions, not feed despair.
