One-sentence summary: the Consensus Crew believe that telling people there’s a 97% consensus about climate change leads to support for action on climate change, but the data shows that it doesn’t, as explained in a new paper by Dan Kahan.

Consensus as a gateway belief

In February 2015, a paper came out in PLOS ONE, The Scientific Consensus on Climate Change as a Gateway Belief: Experimental Evidence, by van der Linden, Leiserowitz, Feinberg and Maibach, referred to henceforth as VLFM. (Maibach is the “expert in the uses of strategic communication” who failed to foresee that his RICO letter would backfire so disastrously.)

The paper proposed a “gateway belief model”: the idea that consensus-messaging (telling people there’s a 97% consensus about climate change) increases belief in AGW and leads to “increased support for public action”. The authors claimed that their experiments, using 1104 people, provided direct evidence to support this model: “these findings provide the strongest evidence to date that public understanding of the scientific consensus is consequential.”

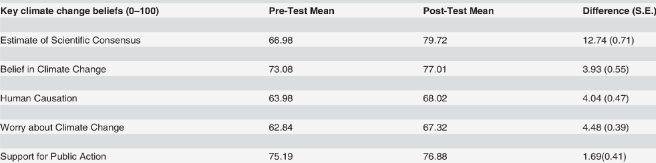

Participants were asked some questions, such as “how strongly do you believe climate change is or is not happening”, and “Do you think people should be doing more or less to reduce climate change” and asked to give an answer on a 0-100 scale. They were then asked the same questions again, after being told that there’s a scientific consensus about climate change.

There are some rather odd things about the paper. It doesn’t explain clearly what they did. It mentions a control group but doesn’t report a comparison between the test group and the control group. It claims that Republicans responded particularly well to the message, but again doesn’t report any results on this. But the strangest thing is that the effect that they report is tiny: the average reported number on the key question of support for action only went up from 75.19 to 76.88. So the reported results don’t support the main claim of the paper (see Kahan’s blog post from the time the paper came out).

PLOS ONE and its data policy

The journal PLOS ONE is not particularly highly regarded. It is an open access journal, which is generally thought to be a good thing, but the downside is that authors pay $1500 to the journal if their paper is published. This obviously gives the journal an incentive to accept papers.

However, a strength of PLOS ONE is its strong data policy — “PLOS journals require authors to make all data underlying the findings described in their manuscript fully available without restriction, with rare exception. When submitting a manuscript online, authors must provide a Data Availability Statement describing compliance with PLOS’s policy”. The policy applies to all papers submitted after March 2014, which includes the VLFM paper.

The VLFM paper quite blatantly flouts the PLOS data policy. Its Data Availability Statement is:

Data Availability: All relevant data are within the paper.

This is completely untrue. As noted above, there is very little data in the paper at all. For some of the reported claims, there is not even any summary data, let alone any raw data. The paper does have some supporting information, but that file turns out to be just another of their papers, again containing very little data.

This open contempt for the journal’s “all data…” policy was noticed by a reader who wrote to the journal requesting that the full raw data set should be made available, and in March this year it was made public as a CSV spreadsheet at figshare.

Kahan’s new paper

Dan Kahan, who runs the Cultural Cognition research project and blog, has recently written a substantial new paper re-analysing the VLFM data, and put it on the SSRN preprint server. His title is “The Strongest Evidence to Date . . .”: What the van der Linden et al. (2015) Data Actually Show. Here is the abstract:

This paper analyzes the data collected in the study featured in van der Linden, Leiserowitz, Feinberg, and Maibach (2015). VLFM report finding that a consensus message “increased” experiment subjects’ “key beliefs about climate change” and “in turn” their “support for public action” to mitigate it. However, VLFM fail to report study data essential to evaluating this claim. Subjects told that “97% of climate scientists have concluded that human-caused climate change is happening” did indeed increase their own estimates of “the percentage of scientists [who] have concluded that human-caused climate change is happening.” But the degree to which they thereafter “increased” their expressed levels of belief in global warming and support for mitigation did not vary significantly (in statistical or practical terms) from the degree to which control-group subjects, who read only “distractor” news stories, increased theirs. The median and modal changes in the 101-point scales used to measure these “increases” was in fact zero for both groups. In addition to reporting the responses of the control-group subjects, the paper corrects VLFM’s misspecified structural equation model and identifies other discrepancies between the data and VLFM’s characterizations of it, including ones relating to the impact of the experimental treatment on subjects of opposing political outlooks.

One of the oddest things about VLFM is their failure to make use of the control group. At the risk of insulting the intelligence of readers, a control group is a group of individuals who are not subjected to a treatment, who can then be compared with those who did get the treatment. In this case, the “treatment” was being told that there’s a consensus on climate change, whereas the control group weren’t told that. Kahan points out that VLFM claim to have compared the treatment group to the control group, but in fact they did not do so. Here’s the table of results from VLFM:

It’s not clear, but you’d assume that these columns of numbers refer to those who were given the “treatment”. In fact, what they did can only be worked out from the numbers in the raw data file. The first row, on the consensus, is indeed for the treatment group. But for the other rows, Belief in climate change … Support for Public Action, the treatment group and the control group were lumped together. This is absurd — I am not aware of any other paper where tests have been done for a treatment and a control, but then the two groups were lumped together rather than compared!

Kahan’s paper shows that there is very little, if any, difference between the control group and the treatment group. For both groups, the median score on belief that climate change is happening when this was asked for a second time was 86. On the support for action question, 27% of those who had been given the consensus message opted for the highest possible number, 100, but so did a slightly higher proportion, 29%, of the control group.
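The comparison that VLFM left out can be sketched in a few lines of Python. This is only an illustration of the idea, not Kahan's actual analysis: the function names are my own, and the toy numbers are made up purely to exercise the code (they are not taken from the VLFM data file). For each group you take every subject's change from the first to the second answer, then compare the groups.

```python
from statistics import mean, median

def changes(pre, post):
    """Per-subject change between the first and second answer (0-100 scale)."""
    return [after - before for before, after in zip(pre, post)]

def compare_groups(treat_pre, treat_post, ctrl_pre, ctrl_post):
    """Summarise the change in each group and the gap between the groups."""
    t = changes(treat_pre, treat_post)
    c = changes(ctrl_pre, ctrl_post)
    return {
        "treatment_mean_change": mean(t),
        "control_mean_change": mean(c),
        # The quantity of interest: how much MORE the treated subjects moved.
        "difference_in_changes": mean(t) - mean(c),
        "treatment_median_change": median(t),
        "control_median_change": median(c),
    }

# Made-up toy values, for illustration only (NOT the VLFM data).
toy = compare_groups(
    treat_pre=[80, 90, 50, 100], treat_post=[80, 90, 54, 100],
    ctrl_pre=[70, 85, 60, 100], ctrl_post=[70, 85, 62, 100],
)
print(toy)
```

The point of the design is that a rise in the treatment group on its own tells you nothing; only the difference between the two groups' changes can be attributed to the consensus message.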

Kahan also criticises the “structural equation model” used by VLFM. This is a piece of circular reasoning based on their hypothesis that knowledge of a consensus feeds through into support for action, where VLFM came up with embarrassingly small effects and again neglected to make proper use of the control group.

Another curious feature of the VLFM paper is that the survey asks about political views, and the paper claims that “the consensus message had a larger influence on Republican respondents”, but no data on this is presented. In fact, this isn’t true (see figs 7 and 8 in Kahan’s paper) — the influence on support for action is equally minute for both Republicans and Democrats, the median change after consensus messaging being zero for both groups.

Here are some averages I worked out for the treatment group only on the question of support for action on climate change:

Democrats, pre-treatment: 81.8

Democrats, post-treatment: 84.3

Republicans, pre-treatment: 64.8

Republicans, post-treatment: 66.7

So the Democrats were moved by a small 2.5 points and the Republicans by an even smaller 1.9 points, the opposite of what VLFM claim. This is confirmed by the statistics in Kahan’s Table 4 that show a slight increase in the partisan divide after the consensus messaging for most of the questions. Furthermore, these numbers show that the tiny effect of the consensus messaging is completely swamped by the difference between Democrats and Republicans, which is almost ten times larger.
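For anyone who wants to check the arithmetic, here is a minimal sketch using only the four averages quoted above. The variable and function names are mine, and the rounding to one decimal place is my choice to match the precision of the quoted figures.

```python
# Treatment-group averages for "support for action" quoted above (0-100 scale).
means = {
    "Democrats": {"pre": 81.8, "post": 84.3},
    "Republicans": {"pre": 64.8, "post": 66.7},
}

def change(group):
    """Post-treatment minus pre-treatment average for one party."""
    return round(means[group]["post"] - means[group]["pre"], 1)

def partisan_gap(when):
    """Democrat minus Republican average, before or after the message."""
    return round(means["Democrats"][when] - means["Republicans"][when], 1)

dem_change = change("Democrats")
rep_change = change("Republicans")
gap_pre = partisan_gap("pre")
gap_post = partisan_gap("post")

# How many times larger the partisan gap is than each party's shift.
ratio_vs_rep = round(gap_pre / rep_change, 1)
ratio_vs_dem = round(gap_pre / dem_change, 1)

print(dem_change, rep_change, gap_pre, gap_post, ratio_vs_rep, ratio_vs_dem)
```

On these figures the gap between the parties widens slightly after the message rather than narrowing, and it is several times larger than either party's shift.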

In summary, here’s a quote from the Kahan paper just before the Conclusion section:

VLFM (p. 6) exuberantly proclaim that “all [their] stated hypotheses were confirmed”: “increasing public perceptions of the scientific consensus causes a significant increase in the belief that climate change is (a) happening, (b) human-caused and (c) a worrisome problem,” and ultimately “increased support for public action.” But if one simply looks at their data, it is hard to understand what they are so excited about.

Update 19 May:

Dan Kahan has written a detailed blog post on his new paper.

Bob Ward is fretting about the miscommunication of climate science to the public, which is ‘putting them at risk’ apparently. He argues that free speech in the media does not extend to questioning the climate change consensus. So what is the greater risk to the public? Being ‘misinformed’ by scientific arguments which question dangerous climate change or being kept on message by autocratic propagandists like Ward whose knee-jerk reaction to losing public faith is to curtail freedom of speech?

What is laughable is that poor Bob is tearing his hair out (what’s left of it) lamenting the miscommunication of the science of climate change to the public but, in reality, his main concern is that the extent of the consensus is being miscommunicated:

“Overall, a disappointingly large amount of inaccurate and misleading information about climate change is communicated to the public by the media. The vast over-representation of viewpoints from individuals and organisations that reject the scientific consensus may largely explain why such a large proportion of the public do not realise the extent of scientific consensus and hence do not share the conclusions of the consensus.”


What it comes down to is that the ‘science’ of global warming HAS been communicated relentlessly to the public over a period of three decades and the public has yet to be convinced by the pronouncements of experts and climate activists alike. Alas, rather than winning over increasing numbers, the ‘science’ of climate change as communicated ‘expertly’ by the likes of the Guardian, is more and more conspicuously failing to convince the public, largely because they have adopted the stupid tactic of screaming louder and louder about the supposed catastrophic consequences of failing to reduce CO2 emissions whilst providing less and less evidence to back up their claims.

So what are they left to play with? The all singing, all dancing Consensus, Cooked up in a University somewhere in Australia and reinforced very recently by the all-star CAGW cast of the Consensus on Consensus. If pushing the dodgy science isn’t working, let’s push the dodgy consensus instead – and stfu those people who dare to publicly question the consensus by legally uncategorising such written and spoken utterances as ‘free speech’. But . . . . disaster! Even consensus messaging is failing to win over the public!


Another positive feature of the PLOS ONE journal is that they accept comments on articles. There are 4 comments on the VLFM article, including the authors’ announcement of the release of the data and Dan Kahan’s comment about his new paper reanalyzing the data.


From Jaime’s post: “Overall, a disappointingly large amount of inaccurate and misleading information about climate change is communicated to the public by the media. The vast over-representation of viewpoints from individuals and organisations that reject the scientific consensus may largely explain why such a large proportion of the public do not realise the extent of scientific consensus and hence do not share the conclusions of the consensus.”

I’d love for bobbie ward to come by and SHOW US just where all of this happened. I am not aware that Skeptics have been given much coverage by pretty much anyone, save the occasional piece in the Daily Mail or whatnot.

[PM: the only explicit example he gives is Nigel Lawson being allowed about 2 minutes on the radio in February 2014, over 2 years ago. He’s still fuming over that, while ignoring the daily diet of climate scaremongering from the BBC, Guardian etc]


There’s a quote from Dr Who “You can hypnotize someone to walk like a chicken or sing like Elvis, you can’t hypnotize them to death.” A consensus equivalent would be “you can guilt nudge people to tick a box or put less water in the kettle, you can’t nudge them to a 2 tonne CO2 footprint”. The consensus meme is already a spent force. It’s done all the persuading it’s going to.

“Support for public action” is a silly metric too. Many people find it hard to connect public expenditure and their own pocket. Even amongst savvy tax payers, ignorance of the true cost of cutting CO2 would lead to an over favourable support for acting. When the bills start rising the support falls away. People might even revolt at having been deluded about the true cost of action.

Oh, and Dr Lew’s at it again.

https://www.washingtonpost.com/news/energy-environment/wp/2016/05/18/climate-change-doubters-really-really-arent-going-to-like-this-study/


This is not a surprising result. An interesting question is then what is the motivation of seemingly intelligent people to invest so much effort in trying to document a consensus and discredit those who might disagree. Another question is why they seem to think that errors in their methodology and research practices would make the results far less credible to the public.

At some point, those very interested in proving and/or documenting a consensus may just be trying to prove a point more than actually make a difference.


Particularly when the claim is BOGUS. There are two such “studies” making that claim that I know about. Neither was done by a credible survey group. The first sent out queries to 10,000+ selected respondents. However, the survey contained ambiguous questions that most any skeptic would agree with, particularly back at that time, namely “do you think human activity has some impact on the climate”. Ever hear of Urban Heat Islands? That’s a well known scenario. The “surveyors” went on and FILTERED out most of the responses, arriving at 75 (or 77?). Only two of those were classified as “skeptics”, thus the 97% response.

The other “study” took an automated look at climate science papers and, based on key words in the documents, categorized them as (1) part of the consensus, (2) not known or (3) not part of the consensus.

Again 97%. But, once again, after examination it turns out that skeptics were included in the count.

In any case 75 (or 77) respondents is hardly adequate for making such global claims when 1,000 science based skeptics signed a petition to the UN a few years ago.


It is a consensus of modelers defending their assumptions.

See my http://www.cato.org/blog/climate-modeling-dominates-climate-science

Semantic analysis indicates that something like 55% of the computer modeling that is done in all of science is done in climate science. That is an incredible concentration, given that climate science is a small field. In addition, we found that 97% of the research papers that mention climate change also refer to modeling. Clearly modeling dominates the field.

Modeling is not true science; modeling is just playing with hypotheses, so there is actually very little climate science being done. Moreover, given that the models all assume AGW, it is no wonder that many climate scientists endorse AGW. It is built into their work.

We need to restructure the climate research program, to refocus it on the attribution problem: How much, if any, are humans responsible for global warming? The models fail to address this central question.


Meanwhile, over at the Bonn bomb branch of the IPCC (session began on May 16 and is due to last until May 26), thanks to the IISD’s dutiful quasi-official reporting, one learns that (word-salad alert and my bold added, along with a few extra para breaks for ease of reading -hro):

Sadly, I have not yet discovered the difference between ordinary run-of-the-UNEP-mill “expert dialogue” and IPCC Chair, Lee’s “structured expert dialogue”. As for “Global stocktake”?! One cannot help but wonder who might be taking whose stock, eh?! But I digress … On this same day, one learns that (inter other inane alia):

Amazing, eh?! But what’s even more amazing (or at least equally so, IMHO) is that the word “consensus” is nowhere to be found in this entire report. Perhaps it’s in the process of being quietly replaced by the (undefined, as far as I have been able to ascertain) “global stocktake” 😉


Regarding Maibach and the RICO letter, Delingpole has an interesting take:

http://www.breitbart.com/environment/2016/05/15/sons-climategate-dodgy-scientists-caught-red-handed-foia-lawsuit/

In his article, Delingpole includes an FOIA e-mail pointed at Maibach’s credentials as a ‘scientist’ that was not included in the linked write-up at WUWT:

[PM: Yes, and this matters because the RICO letter written to the President said “as climate scientists we…” and was signed by Maibach.]


Dan Kahan has now written a detailed blog article himself about his new paper.

To try to get things back on-topic, the issue is not whether or not there’s a consensus and if so what the numerical value of that consensus is, which has been discussed ad nauseam elsewhere. The point is that all that discussion is irrelevant if, as the data indicates, telling people there’s a consensus has virtually no effect on their support for climate policies. (Maibach’s research currently focuses exclusively on how to mobilize populations to adopt behaviors and support public policies that reduce greenhouse gas emissions).


Much respect to Kahan for calling out scientists submitting bad science rather than blaming PLOS ONE, although he doesn’t go so far as to admit that peer review fundamentally adds very little to science. Even more kudos for doing the sums on this paper and spotting the mess.

The consensus is such a strange thing for the other side to obsess about. It’s clearly not affecting the public in any significant way. That the public often think the consensus is much less than 97% suggests several possibilities. 1) They’ve never heard the figure mentioned, which would mean that climate change as an issue was dead to them. 2) They don’t believe the figure, which means that it’s not just climate scientists that have a credibility problem. 3) They are downgrading the figure for some reason. Number 3 might be for several reasons. Firstly, there is no defined consensus. Survey takers don’t ask ‘what percentage of scientists think there has been any warming due to man?’ Too often the consensus is supposed to be CAGW, about which scientists are much more at odds. If you thought the question hinted at the more serious definition, would you still give 97% or would you adjust it for the number that support the more extreme versions? Might you even adjust it for those you think are activists and thus biased by their beliefs? And of course if you’ve lost trust in the scientists you might agree that the consensus was 97% but not give a damn about that figure.

But warmists love the consensus because it’s one of the few things they can deliver that might be easily understood. They themselves might be just relying on the consensus to be right, because they too can’t understand the science. The playbook of warmist arguments is pathetically short:-

Consensus.

Bad weather, any bad weather – hot, cold, wet or dry.

Polar bears dying from any cause.

Photoshopped picture of any city flooded to the second or third floor.

Warming since the LIA, but don’t go into detail because it would just confuse things.

We have emitted CO2 or is it CO or pollution or… oh just stick up a picture of some cooling towers.

Nasty old oil companies oppose warmists, cigarette merchants of doubt blah, blah, blah.

Won’t somebody think of the poor, get them solar panels, stat!

Deniers are stinky idiots who shouldn’t be allowed sharp pencils or raw data.

Err… that’s about it.


I’ve added a couple of comments to Dan’s blog post.

http://www.culturalcognition.net/blog/2016/5/19/serious-problems-with-the-strongest-evidence-to-date-on-cons.html?lastPage=true&postSubmitted=true


Mr Physics has blogged on this too. He admits that

“There are indications that Dan Kahan may well have found a genuine issue with this paper.”


ATTP/SkS – they have fallen into a trap – because sceptics criticise 97% messaging = it must be good..

they can’t seem to grasp, we might criticize because it’s crap –

Dan’s paper, and comment at Plos One (if I read between the academic lines/tone criticism), is almost calling for a retraction?

Dana (and the SkS team) has this mentality: because we criticise, it must be because sceptics think it is working (see his comments in the leaked SkS forum).

But, Dan’s criticism of messaging, etc must totally confuse them, because he is not a sceptic, so they need to try to rationalise why.

Now normally if your opponent is making a mistake, don’t interfere, let him carry on..

But in Dana/SkS world, we know the more we criticise, the more they will continue to use it!

The same was observed when the Hiroshima messaging was criticised: even when climate scientists criticised the messaging (i.e. they are also members of the public, who understand the linkage to catastrophe messaging), SkS could not/would not listen, because they (Dana, John, Lew), not Doug Mcneall or Warren, were the communications experts! LOL. They think it is ‘sticky’; well, it certainly was sticky, but in a totally ‘why are you trying to manipulate me’ way, with crude offensive analogies.

And If we don’t criticize 97% the more they will continue to use it (because they think they will have won) and they will remain totally confused why it isn’t working.

LOL

Do they not see, it just comes across as advertising to the general public (8 out of 10 cats prefer Whiskas, or hair products, etc.), and the public just let the noise wash over them now, after decades of this type of consensus [advertising] messaging for products they are being sold.


now the Luntz memo has been brought up at ATTP

https://andthentheresphysics.wordpress.com/2016/05/19/consensus-messaging-again/#comment-79505

“Perhaps the most famous part of that research is the Frank Luntz memo advocating the promotion of doubt about the science at all levels.” – Izen

my response, this will not appear at ATTP (so here it is)

the Luntz memo – has anyone here actually read it..

Luntz was trying to find why republicans were doing badly on environment/climate issues with their voters. He found in focus groups that if they tried to promote action on the environment with a consensus / science settled message – their target voter groups were resistant to it. saying science is not settled.

and he recommended acknowledging doubt and uncertainty to build trust with those groups so that they would get involved on environment issues!

Which is pretty much what climate scientists have been doing recently, acknowledging uncertainty, etc, in an attempt to gain trust, as the science settled messaging was just not working

so the whole idea that Luntz was advocating doubt to forestall climate action is utterly twisted/opposite of reality

the climate activist has utterly misunderstood the Luntz memo, to create this fiction that fits into their worldview that Republicans are evil

try reading the whole memo.


Shub took a look a while back:

https://nigguraths.wordpress.com/2013/03/19/the-story-of-the-frank-luntz-memo/

“What you’ll see, contrary to widespread rumour, is a document that is primarily concerned with strategies to connect to a majority whose views, as discovered by Luntz, were already favourably disposed toward the climate cause and the environment.

That’s right. It’s about what Luntz found existing in the public, and his ideas for appealing to such an audience. The same thing has been turned on its head and blamed on him for having caused to come into existence, and nurtured to full growth.

For instance, Luntz found via his focus group methods that members of the American public believed “there [was] no consensus about global warming in the scientific community”. His recommendation, arising from what he found, was to make this lack of consensus an issue and defer to science, in order to win over such individuals.

The second famous item, the rebranding of ‘global warming’ into ‘climate change’, is even better. In the memo, Luntz is seen providing the insight that ‘environmentalism’ and ‘global warming’ invoke images of extremism and alarmist dogma, both of which turn off neutral voters who found ‘climate change’ more palatable.

How have the above been turned on their heads? Luntz is directly blamed for (i) trying to induce the American public to think there was no consensus about global warming. and (ii) attempts to rebrand ‘global warming’ to detract from its urgency. Quite the opposite of what he set out to do.

Consider how the environmental movement has used the Luntz memo. Firstly, it paints a picture of a nefarious conspiracy hatched by ‘industry’ to lull the population into sleep using clever slogans. Such characterization neuters Republican party attempts to gain a foothold in the climate debate arena. Secondly, it fixes blame for creating something he just found already existed. Lastly, even as it slams Luntz, it has borrowed and implemented ideas present in his report to advance its own cause.

Consider what has happened since the memo was written. Per Luntz’s focus group findings, a majority of the American public ‘believed in global warming, believed that humans were likely causing it, were not interested in the science, but interested in positive solutions to the problem, wanted jobs, end to dependence on foreign oil, and a shutdown of outsourcing’. Correspondingly he came up with a number of words and phrases to be used by any political party to advance its case.

Last heard, he had just finished work for the pressure group Environmental Defense Fund (EDF).

Postscript: Luntz is just another pollster who has consistently worked toward ending real debate over climate issues. His advice on environmental issues in the Luntz memo is virtually indistinguishable from what is in the current US administration playbook.”


Paul,

You have done an excellent job in going through both the VLFM paper and the Kahan reply. From an academic perspective it is rubbish, just as the Cook et al. 2013 and the Lewandowsky Moon Hoax papers are rubbish. It should be a huge embarrassment to academia. But the underlying data indicates why we will get more of this nonsense.

There were 1104 respondents, split between the 989 who underwent the “Consensus Treatment” (the authors’ term, not mine) and 115 in the Control Group. After undergoing treatment, there was an average increase in appreciation of a scientific consensus by 13 points, but only a 4.5 points increase in the belief that climate change is human-caused and less than a two point increase in the support for action. However, 75% of the respondents already supported action before undergoing treatment, maybe because the respondents were not asked to rank action against other priorities, or informed that on a global scale total mitigation policies will make very little difference. But the public can be sold the notion of consensus, then misconstrue this as an expert consensus on impending catastrophic global warming along with having knowledge of effective policy remedies. This will help nullify opposition to a major area of public policy being taken out of the democratic arena and left to a bunch of political activists.


Yes, Paul good job. I would take the Ken Rice post (which is very out of character with his usual dishonest and insulting style) as an acknowledgment of partial error. Better take a screen shot for documentation.


David, in fact he has two posts on this. In the first one he seems unsure but in the second, “TBH, I don’t really like consensus messaging either”, he acknowledges “I might have to give Dan Kahan some credit”. He seems to have been influenced by some climate scientists.

It’s an interesting admission given that he’s an author on the latest consensus paper which states “Public perception of the scientific consensus has been found to be a gateway belief, affecting other climate beliefs and attitudes including policy support”, citing VLFM and others.

I’m banned from commenting there but have “liked” his blog post!

But both posts are based on a misreading of Kahan’s paper (“As far as I can tell Dan Kahan particularly dislikes consensus messaging”). It’s nothing to do with whether he “likes” consensus messaging or not. It’s about (there’s a clue in the title) what the data actually show. Kahan even includes a paragraph to make it clear that he’s got nothing against consensus messaging:


Against consensus messaging.


You missed out the previous paragraph explaining what he’s against:


Paul,

So, he’s not against it, but is against it? For a supposed expert on science communication, he presents a rather confused message.

I’ve also tried to find out what he is referring to with respect to the “social marketing campaign” on which hundreds of millions of dollars have already been spent. I’ve been unsuccessful so far. Maybe you know.


There are 2 different “it”s, as explained in those paragraphs.


Quite possibly, but for an expert in science communication, he does seem to be doing a poor job of making clear which “it” he’s against, and which one he isn’t. In fact, most consensus messaging I’ve come across is not in the USA and hasn’t been funded by some social marketing campaign, so maybe I should assume that he isn’t against that, but is against something else that may not even really exist. I discovered that his social marketing campaign refers to Al Gore’s Alliance for Climate Protection and (although this isn’t clear) the money may have been spent promoting An Inconvenient Truth. Not only was the only consensus study at that stage Oreskes (2004), I don’t think (I haven’t seen it) that An Inconvenient Truth was based on consensus messaging alone, and according to this the organisation’s revenues were $19 million in 2011 and $6 million in 2013. Seems a bit of a stretch to imagine that they somehow had $300 million to spend on consensus messaging, when that would account for something like 30 years of what seems to be their typical annual revenues.


He makes it very clear what he’s against and what he’s not against in the first few paragraphs of that blog post.


I very much got the impression that he’s against sloppy work, presenting results that the data doesn’t support or even contradicts. Just because people criticise how something was done, doesn’t mean they criticise why you tried to do it.


Quoting from Kahan’s “against consensus messaging” blog:

http://www.culturalcognition.net/blog/2015/6/10/against-consensus-messaging.html

“These meticulous researchers are hedged: no matter what happens, they will have predicted it!

Here, though, is some evidence on whether those who “don’t believe” in climate change trust climate scientists.

Leaving partisanship aside, farmers are probably the most skeptical segment of the US population. But they are also the segment that makes the greatest use of climate science in their practical decision making.

The same ones who say they don’t think climate change has been “scientifically proven” are already busily adapting—self-consciously so—to climate change by adopting practices like no-till farming.

They also anticipate buying more crop-failure insurance. Which is why Monsanto, which is pretty good at figuring out what farmers believe, recently acquired an insurance operation.

Because Monsanto knows how farmers really feel about climate scientists, it also recently acquired a firm that specializes in synthesizing government and university climate-science data for the purpose of issuing made-to-order forecasts tailored to users’ locations. It expects the consumption of this fine-grained, local forecasting data to be a $20 billion market. Because farmers, you see, really really really want to know what climate scientists think is going to happen.”

Kahan (and many others) possess a mistaken concept about what constitutes the expertise of the current crop of ‘climate scientists’. The latter specialize in climate models and PREDICTIONS. In contrast, it is meteorologists who are the masters of weather models, weather history and most importantly FORECASTING. Farmers (and insurance companies and commodity traders) are not interested in predictions…they want and need and invest in forecasts. In the real world of agro-economics, it’s forecasting that counts; predictions and consensus only matter in the realm of politics.

I reside in Texas, and, as a subscriber to WeatherBell, I have been entertained lately with the commentary by the meteorologists who have been forecasting for many months the current heavy rains and flooding in Texas versus the prominent climate scientists at Texas Tech and Texas A&M Universities who have been predicting mega-droughts. The former survive on commercial and individual subscriptions, whereas the latter thrive on government (political) grants.


Returning to Maibach, it seems that he is in deep doodoo and has hired an attorney:

https://wattsupwiththat.com/2016/05/23/cei-fires-back-at-ed-maibach-over-emergency-stay-of-rico20-foia-documents-looks-like-hes-toast/


I’m not convinced. I still rather suspect Gore knew what he was doing when he cited Oreskes04 in his Nobel-Prize-winning infomercial.

Surely it remains possible to contend that consensus messaging *did* work—that it made the joke that is The Science sound credible to hundreds of millions of people who were too scientifically illiterate to understand that consensus messaging is an inherently fraudulent move in science.

It doesn’t follow, of course, that *more* consensus messaging will work *more.* Contrapositively, what does it really prove to point out that exposing people to it for the millionth time in a laboratory environment fails to increase their acceptance of The Science?

Let’s find an uncontacted tribe in the Amazon and see if it doesn’t work on naïve subjects.


There have been a few cartoons about the olive oil romp – one is a picture of a Martian saying to a spaceman ‘If you’ve come to tell us who’s the subject of the injunction, we already know’. The other cartoon is two goldfish in a bowl and one is saying ‘I keep forgetting who it is I’m not supposed to talk about’.

Consensus messaging is most effective when it says what people want to believe. The more expensive the belief, the more evidence people need and the less effective the consensus becomes. So you might be happy to accept the science that there is life in space but you’d want a lot more proof to agree to spend 50% of GDP on space defence. Surely this concept is basic psychology? One of the big differences between sceptics and warmists is that we can see the cost and are experienced in asking difficult questions before we blithely agree to that blank cheque they’re asking for.


If by ‘sceptics’ you mean people who claim to be sceptical of the science of AGW, any consideration of ‘cost’ is irrelevant. It only becomes relevant when talking of preventing AGW or adapting to its effects.


Raff, the ‘science’ of AGW is not settled. The IPCC posits a range of equilibrium climate sensitivity from 1.5-4.5C and they cannot even give a best estimate within that range. TCR (arguably more policy relevant) is guessed at between 1C-2.5C; the transient response to cumulative emissions anywhere between 0.8C-2.5C. Climate change sceptics, it turns out, are largely sceptical of the ‘science’ which says that we should be very worried about ‘dangerous’ global warming, i.e. high climate sensitivity. It is a natural progression from there to also question the cost and effectiveness of mitigating a ‘problem’ which might not be [a problem]! Preventing AGW of ECS=1.5C & TCR=1.0C is going to be a whole lot easier than trying to prevent AGW of ECS=4.5C & TCR=2.5C. Indeed, in the former case, ‘prevention’ is probably not even necessary, requiring only adaptation to modest temp increases and moderate rising seas. So a scenario which AR5 WG1 considers in its ‘likely’ range would make a complete mockery of all those subsidy-sucking solar panels and hideous, bird-killing, bat-killing turbines which have been thrown up to date and are costing the taxpayer and energy customer very dear. The reason they have been thrown up? The precautionary principle, i.e. we cannot afford to simply dismiss the more catastrophic global warming projections. ‘Sceptics’ are generally sceptical of the flimsy scientific evidence which is being put forward to suggest that the probability of these more catastrophic scenarios happening is significant. Sceptics are also sceptical of those people who say that they are merely acting or promoting action based on the ‘science’ of CAGW when in fact they are pursuing a political agenda – and there are plenty of those in the climate alarmist camp.


Cost doesn’t affect truth, but it does raise the bar for evidence. Would you expect a court case to have the same length of trial for a shoplifter as for a mass murderer?


BRAD KEYES @ 24 May 16 at 1:51 pm

Could I disagree with you? To assume that (former) VP Gore knew what he was doing when he cited Oreskes04 in his Nobel-Prize-winning infomercial assumes that he has a greater understanding than he portrays, but chooses to say something else, knowing it to be false. The alternative view is that, as a politician, Al Gore gives precedence to appreciative (but baseless) opinions that inflate his ego over real-world facts and empirically supported scientific hypotheses that contradict his beliefs and might challenge his world view. As with the Climate Bayesians, evaluation is based upon established (or consensus) opinions rather than inconvenient facts. Al Gore is not like the Al Capone of the “Untouchables” film, who kept a secret set of books whilst presenting a picture of poverty to the tax authorities. Rather, former VP Gore believes all the rhetoric, as that is the alternative reality he inhabits.

Manic,

You may think you disagree with me, but I don’t think I disagree with you.

It’s often the case that when a skilful actor like Al Gore wants to tell someone a lie, he first lies to himself, and believes it, so that he can then repeat it with absolute sincerity and conviction.

So the hypothesis that he believes his own schtick isn’t incompatible with the hypothesis that he’s being dishonest. The synthesis, as it were, is that he’s still lying—but not to the audience.


“he first lies to himself, and believes it”

That is the key to why journalists, politicians and celebs are driving this and not scientists. The scientists get bogged down in the truth and the public rightly work out that it’s not as clear cut as they want us to believe. It’s only just coming home to them that they have to live up to the hype the commentators create for them.


Sorry—to be clear, when I averred that

>>I’m not convinced.

I merely meant that I have my doubts about the titular claim that “Consensus messaging doesn’t work.”

The body of the post, however, is very convincing in establishing that the VLFM paper isn’t worth the, uh, paper it’s electronically not printed on.

I still suspect, even though VLFM does absolutely nothing to confirm this and arguably was incapable of confirming it by design, that consensus messaging DOES have an effect the first hundred or so times you subject the average non-scientist to it. As I said, though, we’d probably have to go deep into the Amazon to really test this hypothesis.
